👨‍🎓 About Me

I am a 3rd-year PhD student at King’s College London. I am fortunate to be supervised by Lecturer Lin Gui and Professor Yulan He. My current research focuses on Large Language Model Reasoning in Natural Language Processing (NLP).

I completed both my Bachelor’s and Master’s degrees in Computer Science and Technology at Harbin Institute of Technology (Shenzhen), under the guidance of Professor Ruifeng Xu. During my master’s studies, my research focused on Stance Detection and Argument Mining.

Beyond research, I have interned at Tencent Music Entertainment for nine months and at Shopee for four months, and worked at Baidu for five months as a Natural Language Processing Algorithm Engineer. I am currently interning at Microsoft Research Asia.

🔥 News

- 2026.05: 🎉 4 papers accepted to ICML 2026

- 2026.04: 🎉 1 paper accepted to ACL 2026 (Demo Track)

- 2025.11: 🎉 1 paper accepted to AAAI 2026 (Oral🌟)

- 2025.08: 🎉 1 paper accepted to EMNLP 2025

- 2025.05: 🧑‍💻 Started internship at Microsoft Research Asia (MSRA)

- 2025.05: 🎉 1 paper accepted to ACL 2025

- 2025.05: 🎉 1 paper accepted to ICML 2025 (Spotlight🌟)

- 2024.11: 💬 Invited talk: “Complex Reasoning in Narratives with LLMs”, Beijing Foreign Studies University

- 2024.05: 🎉 2 papers accepted to ACL 2024

- 2023.10: 🚀 Started Ph.D. at King’s College London (KCL)

- 2023.08: 🧑‍💻 Completed 5 months as NLP Algorithm Engineer at Baidu

- 2023.03: 🎓 Obtained Master’s degree from Harbin Institute of Technology, Shenzhen

- 2022.09: 🧑‍💻 Completed 4-month internship at Shopee

- 2022.04: 🧑‍💻 Completed 9-month internship at Tencent Music Entertainment Group

- 2022.03: 🎉 1 paper accepted to SIGIR 2022

- 2022.02: 🎉 2 papers accepted to ACL 2022

📝 Publications

(* Equal contribution, † Corresponding author)

🤔 LLM Reasoning, Decoding & Code Intelligence

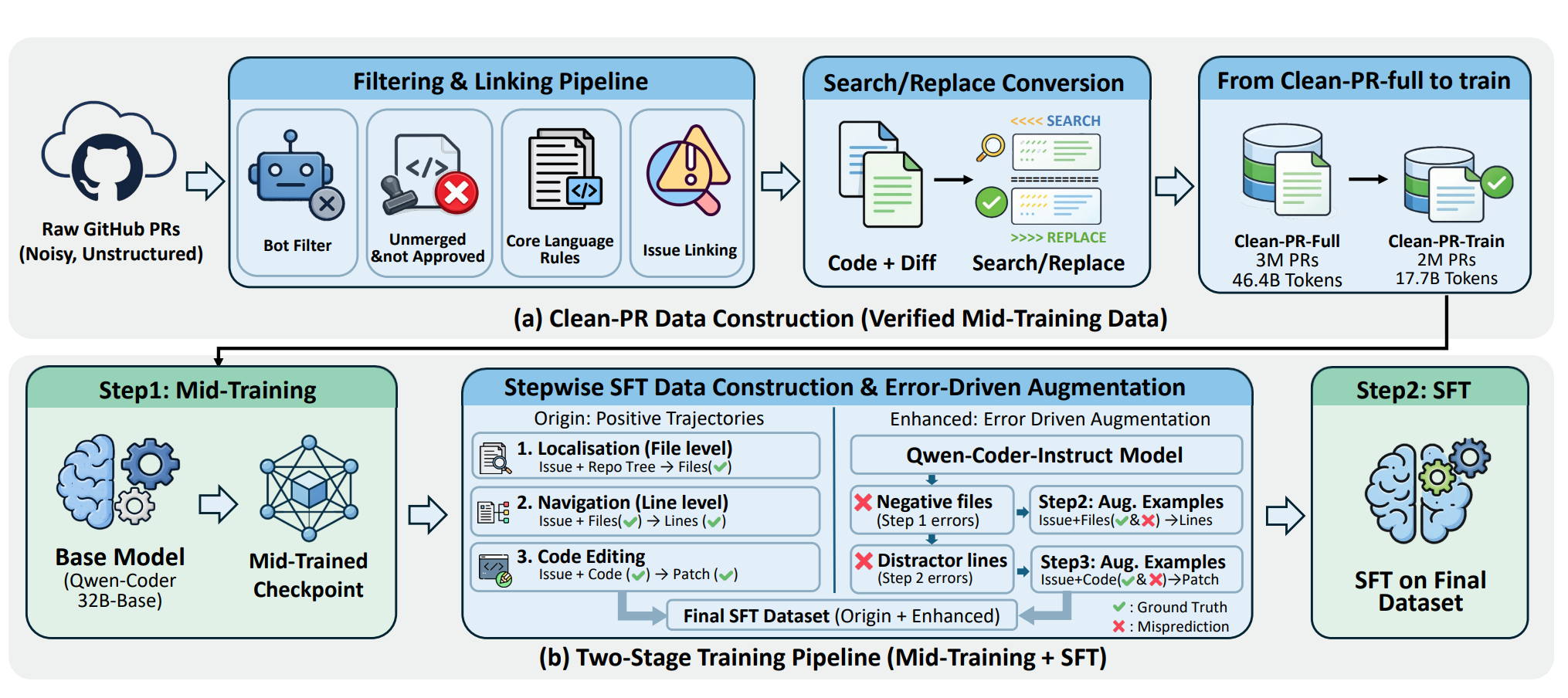

Pull Requests as a Training Signal for Repo-Level Code Editing

Qinglin Zhu, Tianyu Chen, Shuai Lu, Lei Ji, Runcong Zhao, Murong Ma, Xiangxiang Dai, Yulan He, Lin Gui, Peng Cheng, Yeyun Gong.

- Introduces Clean-PR, a pipeline that converts noisy PR diffs into structured edit blocks, yielding 2M training samples across 12 languages.

- Achieves +13.6% on SWE-bench Lite and +12.3% on SWE-bench Verified, demonstrating the value of real-world PRs for repo-level code editing.

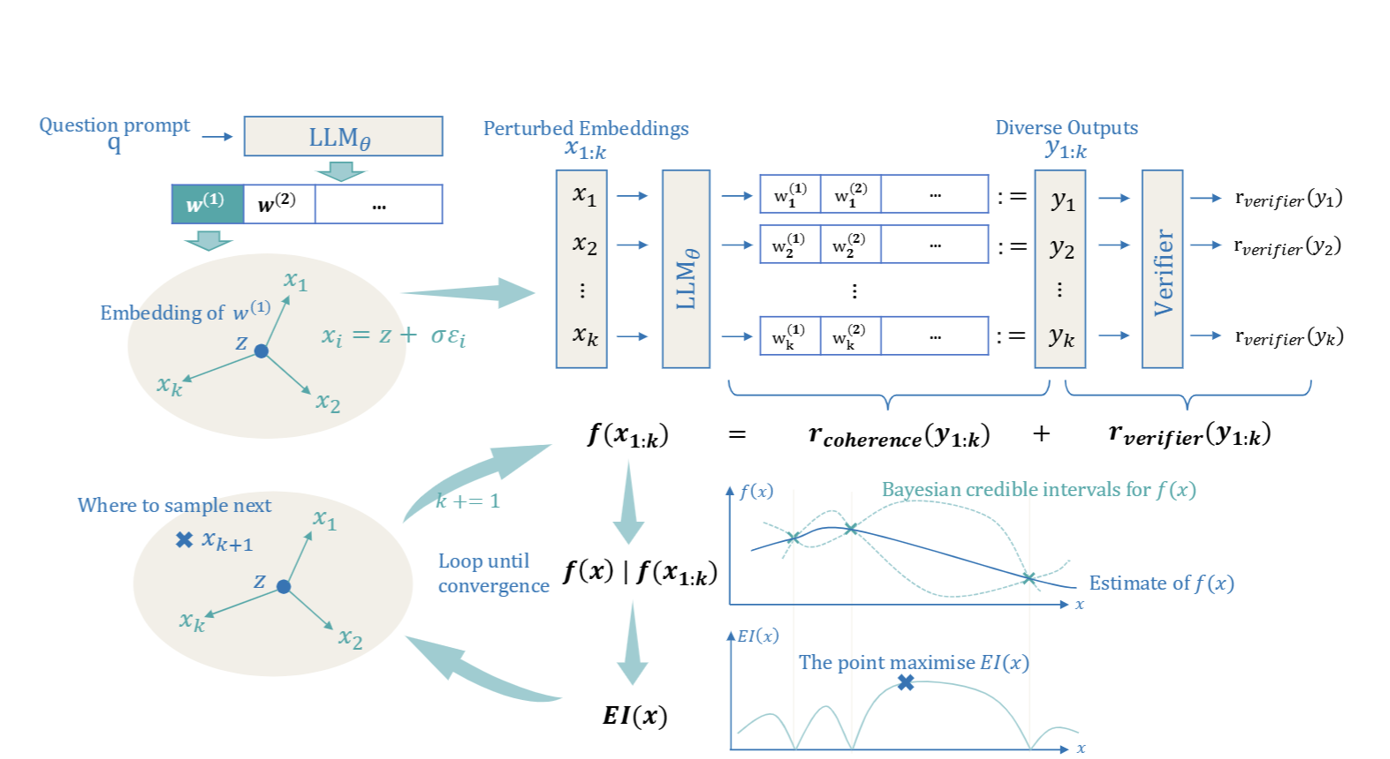

Soft Reasoning: Navigating Solution Spaces in Large Language Models through Controlled Embedding Exploration

Qinglin Zhu, Runcong Zhao, Hanqi Yan, Yulan He, Yudong Chen, Lin Gui.

- Proposes an embedding-based search framework that guides LLM generation by optimising the first token’s embedding.

- Combines embedding perturbation for controlled exploration with Bayesian optimisation via a verifier-guided objective, balancing exploration and exploitation.

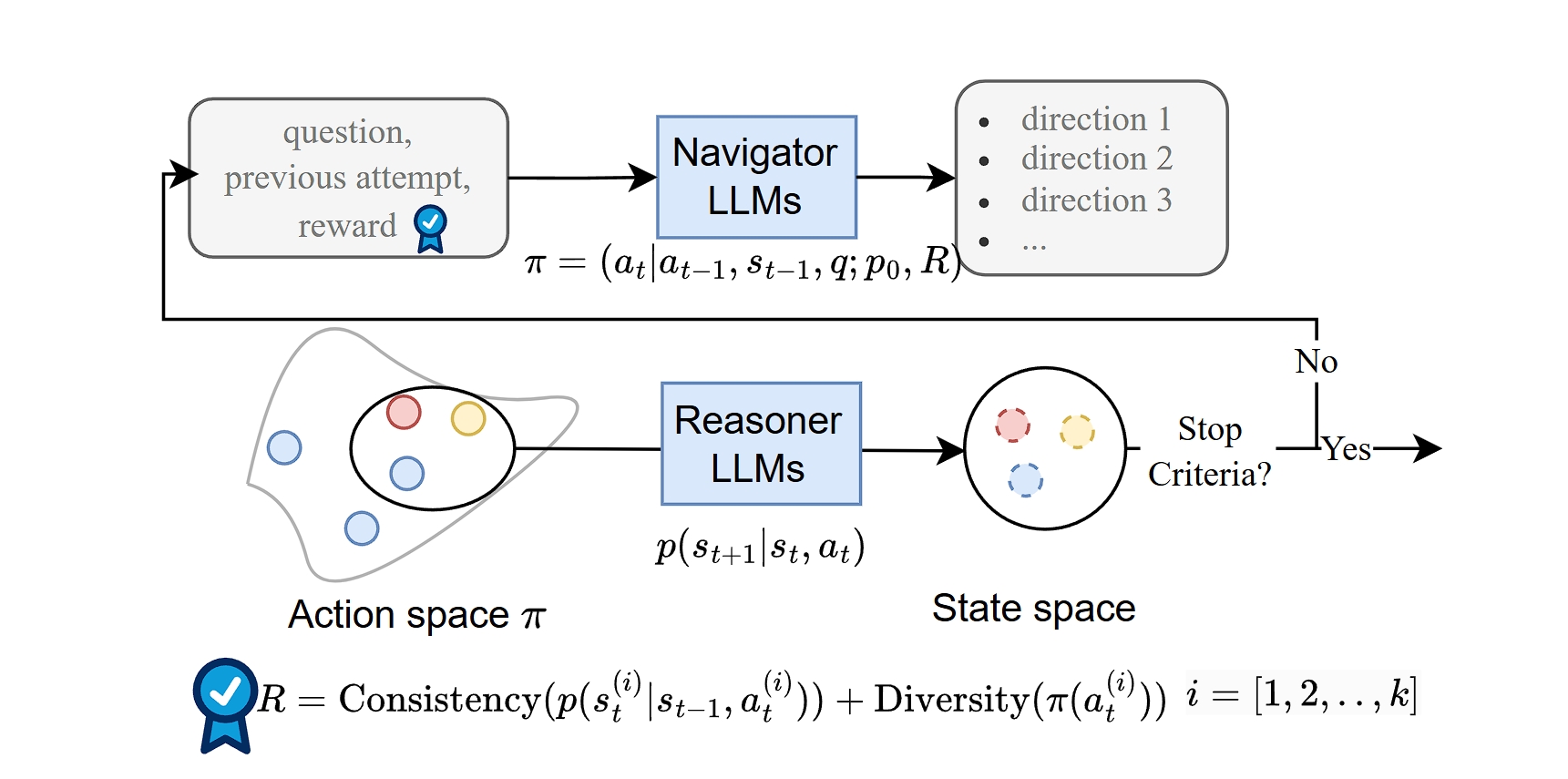

Mirror: A Multiple-perspective Self-Reflection Method for Knowledge-rich Reasoning

Hanqi Yan*, Qinglin Zhu*, Xinyu Wang, Lin Gui, Yulan He.

- Proposes Mirror, enabling LLMs to reflect from multiple perspectives via Navigator–Reasoner cooperation.

- Encourages both diverse and consistent reasoning to overcome self-reflection traps.

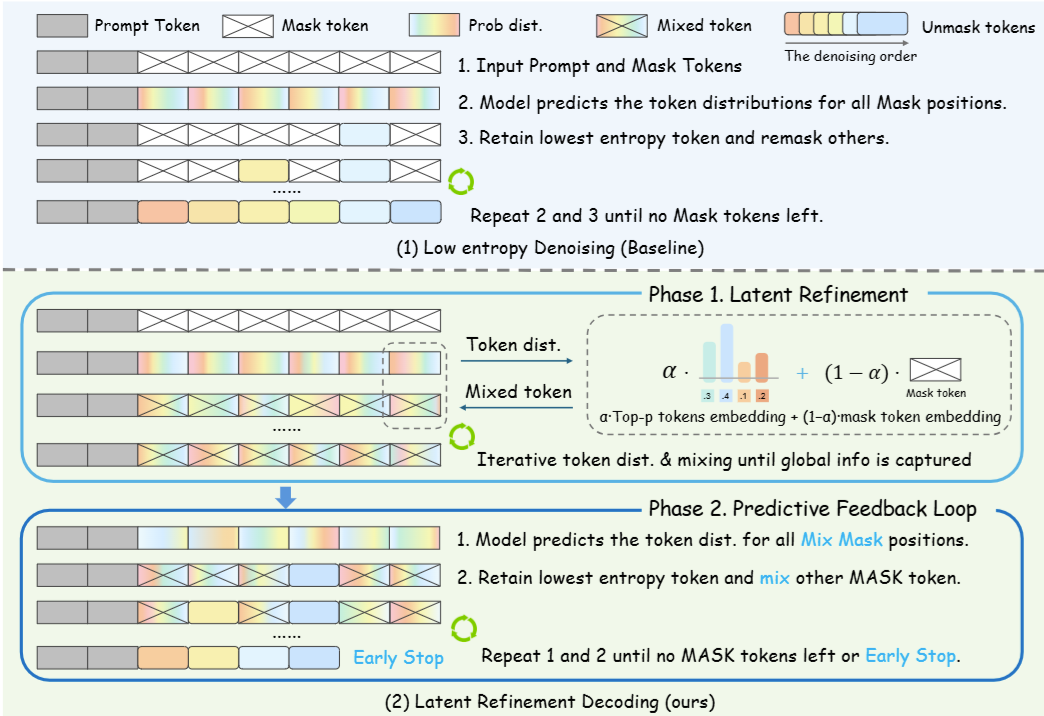

Latent Refinement Decoding: Enhancing Diffusion-Based Language Models by Refining Belief States

Qinglin Zhu, Yizhen Yao, Runcong Zhao, Yanzheng Xiang, Amrutha Saseendran, Chen Jin, Philip Alexander Teare, Bin Liang, Yulan He, Lin Gui.

- Proposes Latent Refinement Decoding (LRD), a two-stage framework that tackles information loss and premature commitment in diffusion-based language models via latent refinement and predictive feedback.

- Enables faster, globally consistent parallel generation as a principled alternative to autoregressive decoding.

[ICML-2026] Stop the Flip-Flop: Context-Preserving Verification for Fast Revocable Diffusion Decoding

Yanzheng Xiang, Lan Wei, Yizhen Yao, Qinglin Zhu, Hanqi Yan, et al.

[ICML-2026] Linear Ensembles Wash Away Watermarks: On the Fragility of Distributional Perturbations in LLMs

Zhihao Wu, Gracia Gong, Qinglin Zhu, Yudong Chen, Runcong Zhao.

[ICML-2026] Detecting Contextual Hallucinations in LLMs with Frequency-Aware Attention

Siya Qi, Yudong Chen, Runcong Zhao, Qinglin Zhu, Zhanghao Hu, et al.

[EMNLP-2025] Sparse Activation Editing for Reliable Instruction Following in Narratives

Runcong Zhao, Chengyu Cao, Qinglin Zhu, et al.

[Preprint] Synthesizing File-Level Data for Unit Test Generation with Chain-of-Thoughts via Self-Debugging

Ziyue Hua, Tianyu Chen, Yeyun Gong, Shuai Lu, Peng Cheng, Qinglin Zhu, et al.

📚 Narrative Understanding

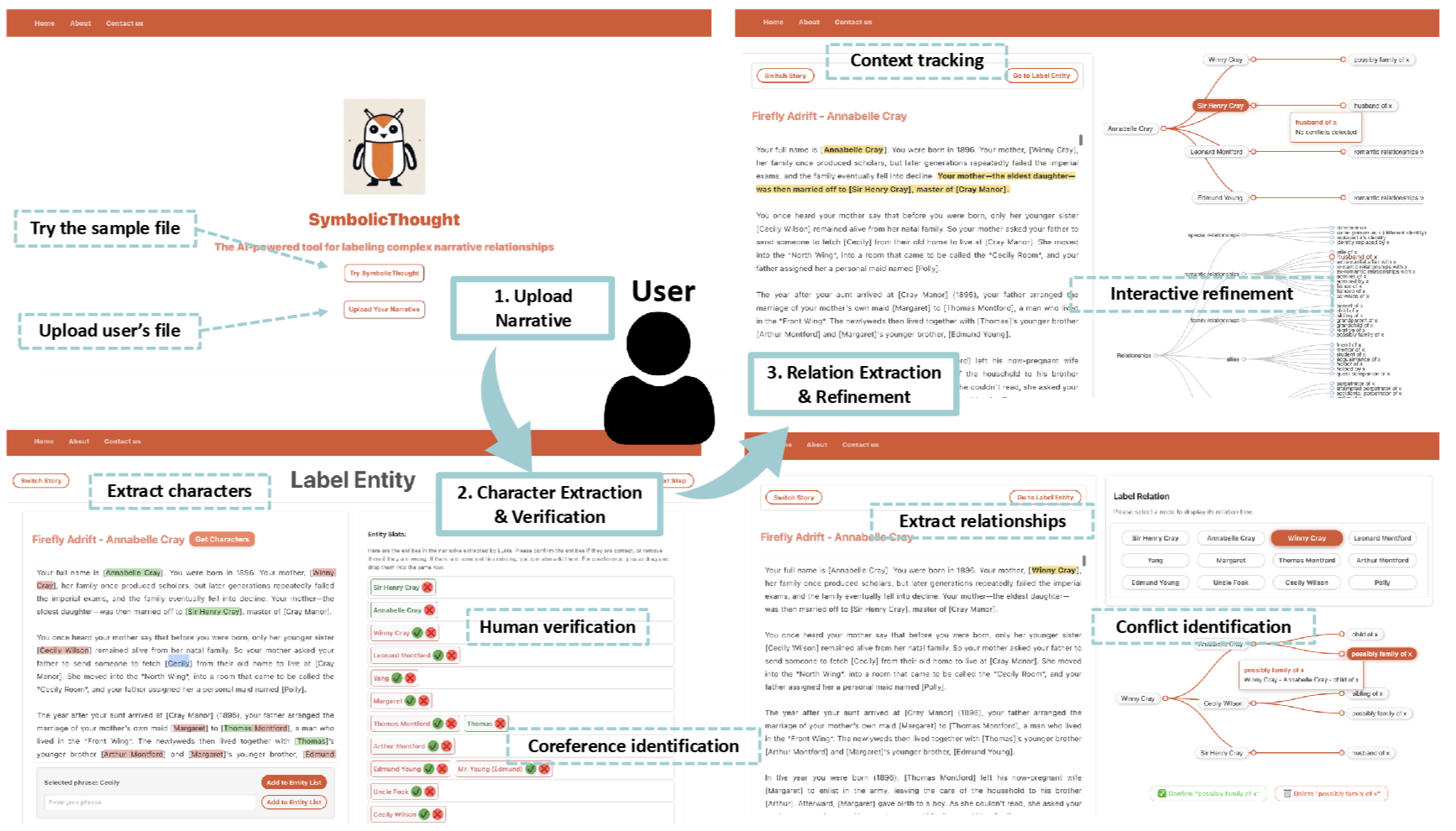

SymbolicThought: Integrating Language Models and Symbolic Reasoning for Consistent and Interpretable Human Relationship Understanding

Runcong Zhao, Qinglin Zhu*, Hainiu Xu, Bin Liang, Yulan He, Lin Gui.

- Proposes SymbolicThought, a human-in-the-loop system combining LLM extraction and symbolic reasoning for character relationship understanding.

- Supports editable relationship graphs, logical constraints, and interactive conflict resolution.

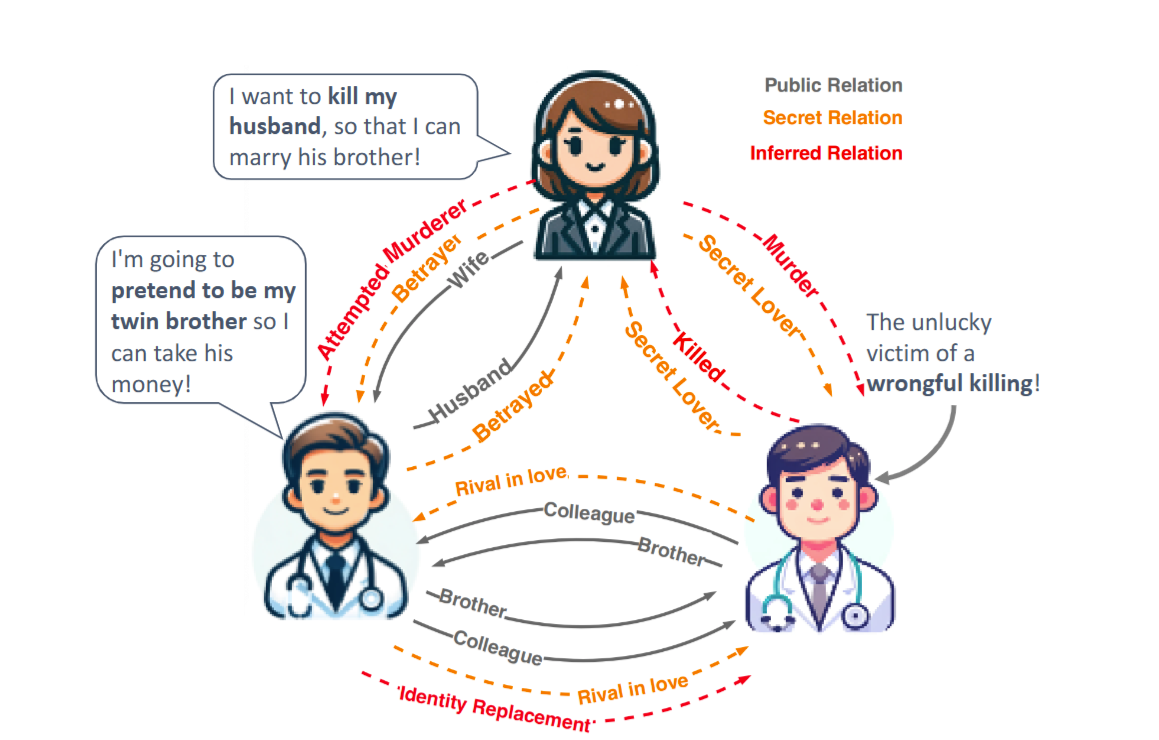

Large Language Models Fall Short: Understanding Complex Relationships in Detective Narratives

Runcong Zhao*, Qinglin Zhu*, Hainiu Xu, Jiazheng Li, Yuxiang Zhou, Yulan He, Lin Gui.

- Observes that existing narrative-understanding datasets often fail to represent the complexity and uncertainty of relationships in real-life social scenarios.

- Introduces Conan, a benchmark for extracting and analysing intricate character relation graphs from detective narratives.

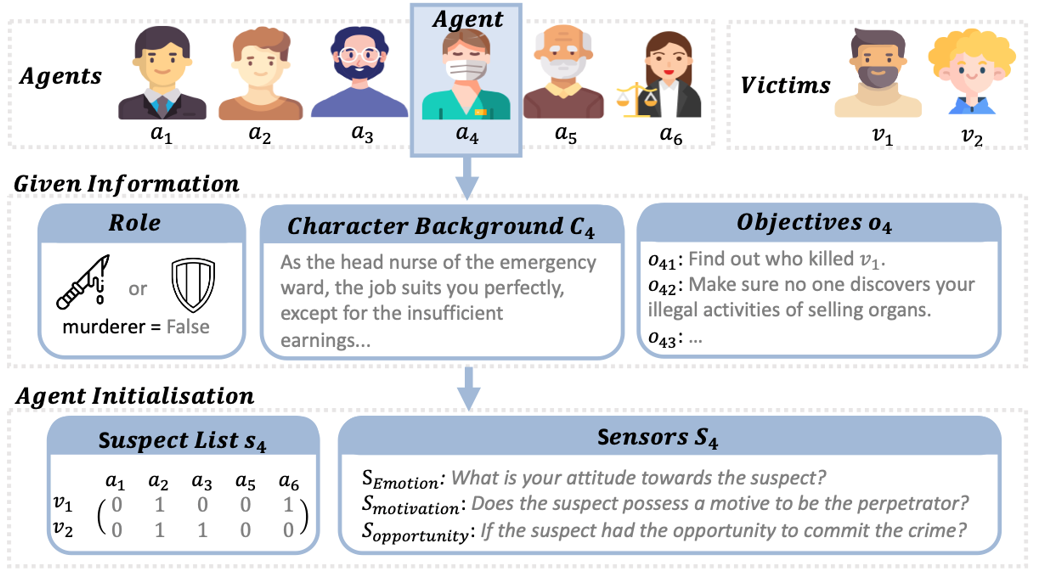

PLAYER*: Enhancing LLM-based Multi-Agent Communication and Interaction in Murder Mystery Games

Qinglin Zhu, Runcong Zhao, Jinhua Du, Lin Gui, Yulan He.

- Proposes PLAYER*, a framework for Murder Mystery Games (剧本杀) that combines an anytime sampling-based planner with a questioning-driven search framework.

🔍 Retrieval and Memory for LLMs

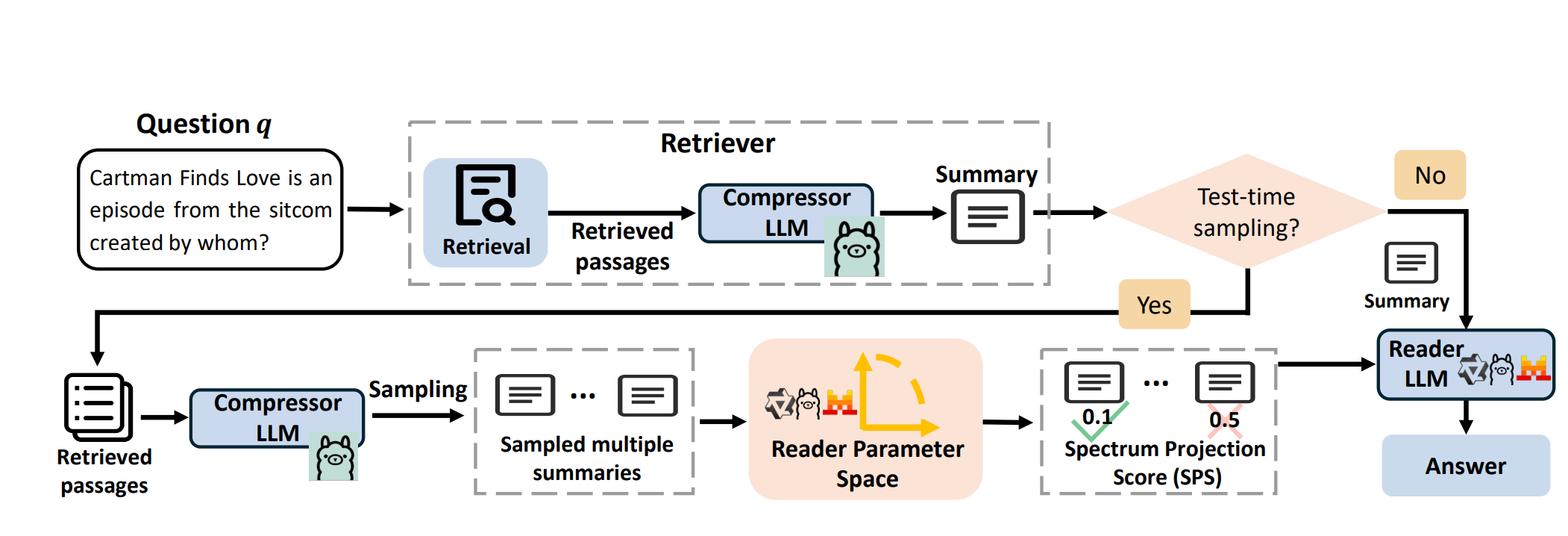

Spectrum Projection Score: Aligning Retrieved Summaries with Reader Models in Retrieval-Augmented Generation

Zhanghao Hu, Qinglin Zhu, Siya Qi, Yulan He, Hanqi Yan, Lin Gui.

- Proposes SPS, a supervision-free metric to assess semantic alignment between retrieved summaries and LLM representations.

- Introduces xCompress, an inference-time controller that ranks and compresses retrievals to improve generation and clarify retrieval–generation interaction.

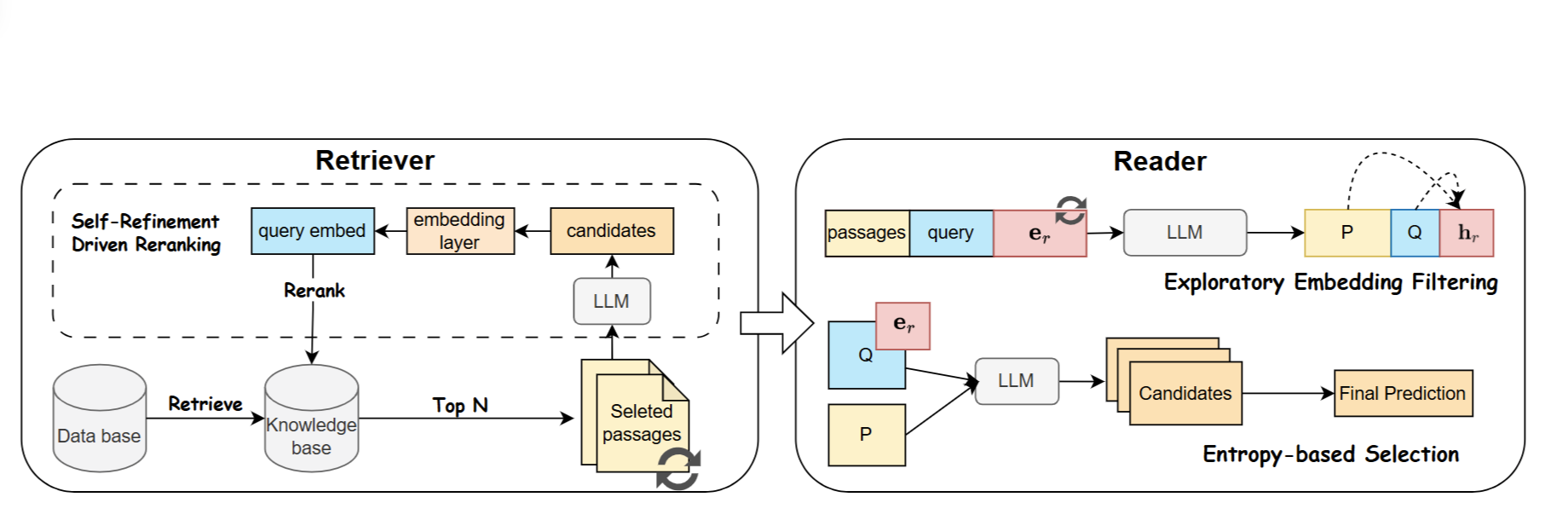

Beyond Prompting: An Efficient Embedding Framework for Open-Domain Question Answering

Zhanghao Hu, Hanqi Yan, Qinglin Zhu†, Zhenyi Shen, Yulan He, Lin Gui.

- Proposes EmbQA, an embedding-level framework for open-domain QA that optimises retrieval with unsupervised contrastive learning and improves answer diversity via exploratory embeddings.

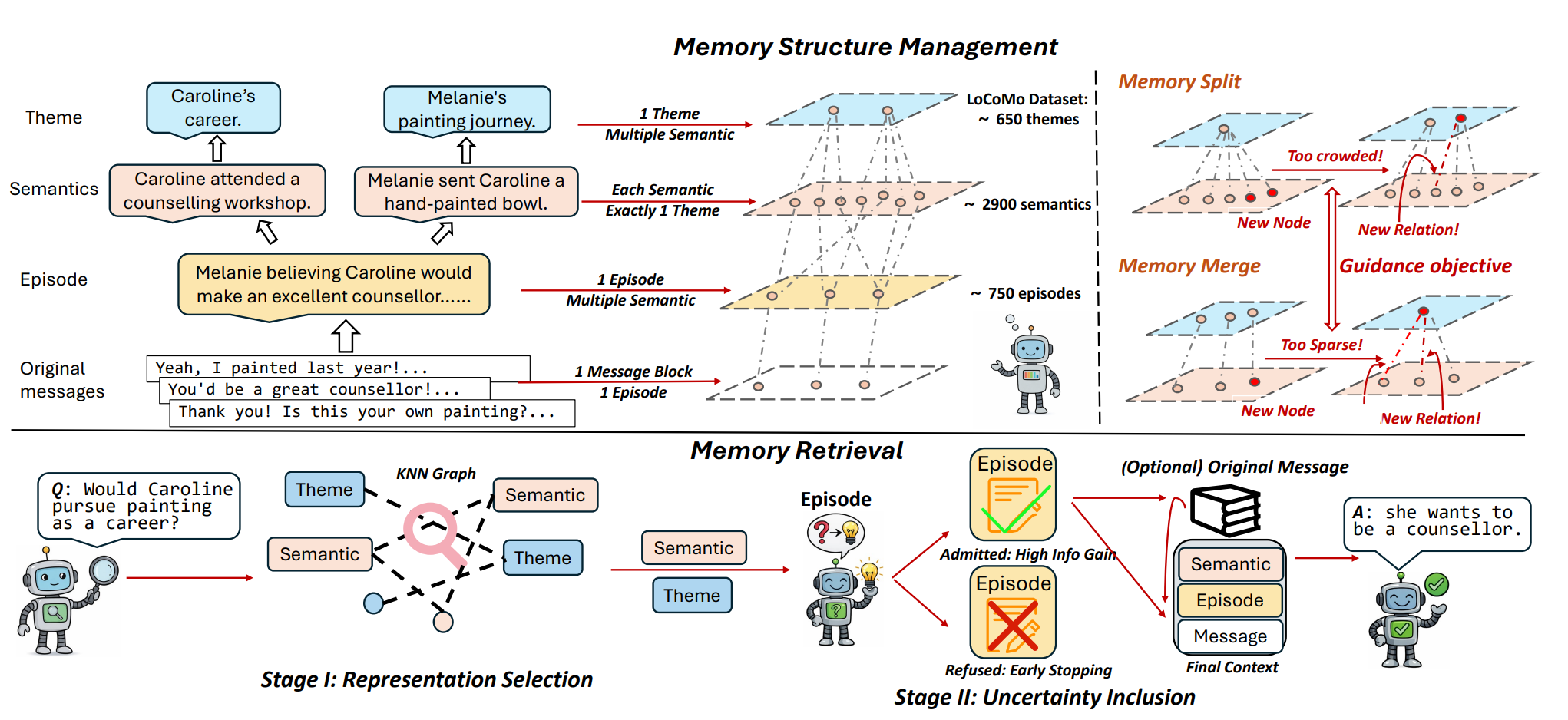

Beyond RAG for Agent Memory: Retrieval by Decoupling and Aggregation

Zhanghao Hu*, Qinglin Zhu*, Hanqi Yan, Yulan He, Lin Gui.

- Proposes xMemory, which decouples agent memories into semantic components and organises them hierarchically.

- Retrieves via top-down aggregation to capture diverse themes, outperforming standard RAG on long-horizon agent tasks.

[Preprint] Beyond Static Cropping: Layer-Adaptive Visual Localization and Decoding Enhancement

Zipeng Zhu, Zhanghao Hu, Qinglin Zhu, et al.

😆 Sentiment Analysis and Stance Detection

[ACL-2022] JointCL: A Joint Contrastive Learning Framework for Zero-Shot Stance Detection

Bin Liang*, Qinglin Zhu*, Xiang Li, Min Yang, Lin Gui, Yulan He, and Ruifeng Xu.

[SIGIR-2022] Enhancing Zero-Shot Stance Detection via Targeted Background Knowledge

Qinglin Zhu, Bin Liang, Jingyi Sun, Jiachen Du, Lanjun Zhou, and Ruifeng Xu.

[CCL-2020] Attention-based Recurrent Network Combined with Financial Lexicon for Aspect-level Sentiment Classification

Qinglin Zhu, Bin Liang, Liuyu Han, Yi Chen, Ruifeng Xu, and Ruibin Mao.

[LREC-2020] Target-based sentiment annotation in Chinese financial news

Chaofa Yuan, Yuhan Liu, Rongdi Yin, Jun Zhang, Qinglin Zhu, Ruibin Mao, Ruifeng Xu.

🗣️ Argumentation Mining and Sequence Labeling

[ACL-2022] Have my arguments been replied to? Argument Pair Extraction as Machine Reading Comprehension

Jianzhu Bao, Jingyi Sun, Qinglin Zhu, and Ruifeng Xu.

[SemEval-2021] HITSZ-HLT at SemEval-2021 Task 5: Ensemble Sequence Labeling and Span Boundary Detection for Toxic Span Detection

Qinglin Zhu, Zijie Lin, Yice Zhang, Jingyi Sun, Xiang Li, Qihui Lin, Yixue Dang, and Ruifeng Xu.

[NLPCC-2021] A Hierarchical Sequence Labeling Model for Argument Pair Extraction

Jingyi Sun, Qinglin Zhu, Jianzhu Bao, Jipeng Wu, Caihua Yang, Rui Wang, and Ruifeng Xu.

🏅 Honors and Awards

- 2023.03 Outstanding Master’s Graduate & Outstanding Dissertation for Master’s Degree, HIT (3%)

- 2023.03 Sailvan Times Scholarship (2%), Harbin Institute of Technology

- 2021.10 National Scholarship (for Graduate Students, 2%)

- 2021.10 Pacemaker to Merit Student (2%), Harbin Institute of Technology

- 2021.03 NLPCC-2021 Shared Task: Argument Pair Extraction (Top 1)

- 2021.02 SemEval-2021 Task 5 Competition: Toxic Spans Detection (Top 1, Team Leader)

- 2020.06 Outstanding Bachelor’s Graduate & Outstanding Dissertation for Bachelor’s Degree, HIT (3%)

- 2019.10 National Scholarship (for Undergraduate Students, 2%)

📖 Education

- 2023.10 - Present, Ph.D., King’s College London, London, UK.

- 2020.09 - 2023.03, Master’s, Harbin Institute of Technology (Shenzhen), Shenzhen, China.

- 2016.09 - 2020.06, Bachelor’s, Harbin Institute of Technology (Shenzhen), Shenzhen, China.

💻 Work Experience and Internships

- 2025.05 - Present, Microsoft Research Asia, Beijing, China. (Internship)

- 2023.04 - 2023.08, Baidu, Shanghai, China.

- 2022.06 - 2022.09, Shopee, Shanghai, China. (Internship)

- 2021.08 - 2022.04, Tencent Music Entertainment Group, Shenzhen, China. (Internship)

🫡 Service

- Reviewer: ICLR, ICML, ACL, AAAI, EMNLP, NAACL, NLPCC.

Last Updated: May 1, 2026